Statistical Machine Learning

This course complements the Master's curriculum with models, methods and concepts mainly from the realm of machine learning. We will study intermediate to advanced models (e.g. trees, neural networks, ensembles), discuss alternative feature representations (adaptive basis functions) and strategies to ensure generalization (e.g. validation, regularization).

Participants are required to bring working knowledge in R or the willingness and effort to obtain that on the side. If you feel not comfortable with R, you may join the Bachelor module "Data Science I" in the first month of the winter semester to catch up (no credit).

Course Structure

This is a hands-on course: A weekly lecture is complemented by a weekly R Tutorial that we use to discuss, implement and practice the current topic.

Content

The course content is designed to complement the mandatory classes in empirical economics:

- We start with empirical loss minimization and introduce the notion of variance.

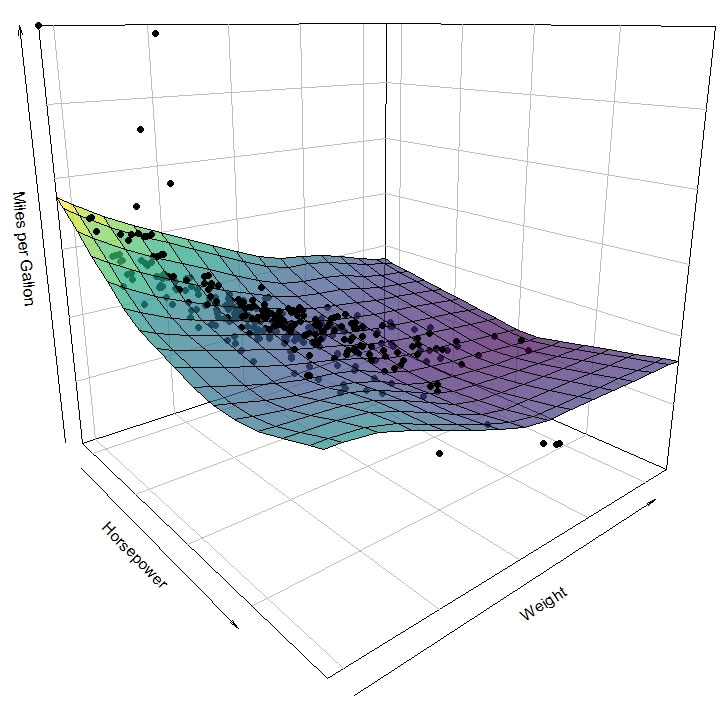

- Models beyond the linear model are introduced: tree models, adaptive basis functions, neural networks. We have some flexibility here and may include other models of interest.

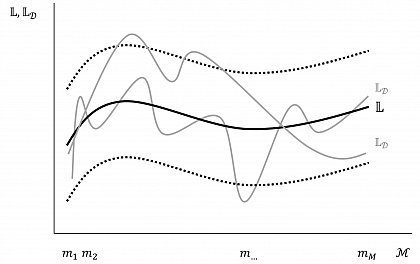

- We develop the notion of generalization in machine learning based on the B-V tradeoff and juxtapose generalization it with the econometrics/causal inference view that you are familiar with. Different strategies to investigate or achieve generalization (e.g. validation, information criteria, regularization) will be introduced.

- Ensemble methods that aim to deal with B-V problems are introduced.

- We reconcile the desire for causal inference in economics (and other fields) with the powerful, predictive models taught in this class under the umbrella of Double Machine Learning.

- Depending on time, we may look at strategies to deal with high-dimensional data via e.g. principle component analysis or variable clustering.

| Week 1 | Empirical Loss Minimization |

| Week 2 | Tree models |

| Week 3 | Adaptive Basis Functions |

| Week 4 | Neural Networks |

| Week 5 | Neural Networks |

| Week 6 | Generalization |

| Week 7 | Generalization |

| Week 8 | Model Selection |

| Week 9 | Ensembles: Bagging |

| Week 10 | Ensembles: Boosting |

| Week 11 | Double Machine Learning |

| Week 12 | Double Machine Learning |

| Week 13 | (PCA) |

| Week 14 | (Clustering) |

| Week 15 | Lab Sessions |

Coursework

Currently, both a project and a presentation are due at the end of the semester. Details will be announced in class.